With almost 12 billion searches made on Google each year for content, products, and services (and that’s just a portion of the market), it’s clear how search engine optimization could reap rewards for almost any business.

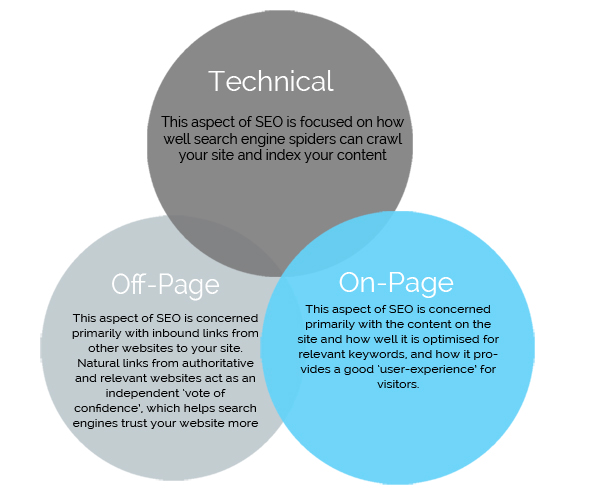

Where in the pecking order a site or page is displayed in search results is dependent on a number of factors, which can generally be grouped into three categories: Technical, Off-Page, and On-Page.

On-page SEO is largely concerned with content, such as blog posts, and how well it is optimized. Technical SEO is largely concerned with how well a site’s code can be crawled and indexed by search engines. Off-page SEO largely relates to backlinks from other websites to your site.

I feel I’m pretty strong on creating high-quality content (and the audit below confirmed that). However, I wanted to make sure I was doing on-page optimization as well as possible and that I wasn’t doing anything wrong on the technical side that might cause Google to downgrade my site.

Before getting this audit, I didn’t know exactly what I should be looking for, nor how to do it, so I wanted to get someone who really knows what they’re doing to give me an unbiased opinion.

So I hired Jason White to do an SEO audit of my site.

Jason White is VP of SEO and SMM at DragonSearch, a digital marketing agency. A keyboard jockey by day, Jason dreams about epic rides through high mountains which usually end at a swimming hole with burgers and a good beer. Jason has spoken at SMX, NYU, WordCamp and has work spread across print and digital. You can find Jason via Twitter or on the DragonSearch blog.

Here are some of the most actionable and high-leverage items from the outstanding report he delivered to me. I think you’ll find some ways to improve your site’s technical and on-page SEO, as well as some general content marketing and website usability best practices. I’ll be implementing most of these suggestions, and I think they will help me gain more traffic from Google as well as improve the experience for readers.

Goal of an SEO Audit

When auditing a website, it’s always important to first start with the goal. Does the website sell products or services? Is it a content hub? Are we trying to earn whitepaper downloads or newsletter signups? Shaping an audit with the purpose of the website in mind seems like a simple thing but it’s often missed.

The approach to any Search Engine Optimization campaign should incorporate many on-site and off-site activities that help to form a well-optimized digital property. Optimal positioning is achieved by the methodical application of SEO best practices, combined with website usability and functionality, as well as social signals to the highest degree possible. Customized audits will forever trump cookie-cutter or automated auditing processes because the auditor can review the website with an objective mind.

Organic SEO is a long-term strategy that takes time before benefits are seen. A holistic approach expands beyond traditional SEO and will not only ensure that the website is findable and accessible for indexation by search engine spiders but will also help improve your overall digital footprint, the website’s authority, its relevancy, and the overall user experience.

1. SEO but with Usability Top of Mind

When we review websites, we pay close attention to first impressions and usability. SEO is not the only consideration for an effective marketing strategy or website. It’s extremely important that users are able to navigate the site easily and find the information they need. We don’t market to search engines; we market to real users, as they have the cash to buy our products. While there are hundreds of ranking factors, at the core, Google is interested in serving the best experience for a user’s query. When we keep usability top of mind and offer a rich experience, the search engines will follow.

Top Recommendations as they relate to your site:

- Create a navigational and sub-navigational structure to your website.

- Products and contact information should be included on these pages.

- We want to make it easy for users to find and consume the information they’re looking for.

- Consider using a program other than ConvertKit for your email marketing and eBook sale efforts.

- ConvertKit automates some processes but in doing so, takes away control from your overall branding, makes tracking success much more difficult and takes away some important aspects to technical SEO.

- Implement responsive design to offer mobile and tablet visitors a better experience.

- As a rule of thumb, if less than 15% of your traffic is coming from mobile devices, it’s ok to wait but continue to track. 15-30% mobile traffic, start thinking about a responsive or dedicated mobile website. 30-50%, it’s time to implement! More than 50% of your traffic is on a mobile device? You aren’t serving your users a quality experience.

- Utilize all of the tracking features available in Google Analytics – site search, demographics, conversions & event tracking, syncing Google Analytics with Google Search Console.
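The mobile-traffic rule of thumb in the list above can be written down as a small lookup. This is only a sketch: the function name and return strings are made up for illustration, and because the audit’s ranges overlap at 30% and 50%, the exact boundary handling here is an assumption.

```python
def mobile_recommendation(mobile_share: float) -> str:
    """Map the share of traffic from mobile devices (0.0 to 1.0) to the audit's advice.

    Boundary handling is an assumption: 30% falls into "implement now",
    and exactly 50% is still treated as "implement now".
    """
    if not 0.0 <= mobile_share <= 1.0:
        raise ValueError("mobile_share must be between 0.0 and 1.0")
    if mobile_share < 0.15:
        return "wait, but keep tracking"
    if mobile_share < 0.30:
        return "start planning a responsive or dedicated mobile site"
    if mobile_share <= 0.50:
        return "implement now"
    return "overdue: users are not getting a quality experience"


# The audit later reports 31% mobile and tablet traffic for this site.
print(mobile_recommendation(0.31))
```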

Navigation

The website features a fantastic quantity of rich content. The posts themselves are wonderfully written with an average of around 1,000 words per piece. Navigating to this content, viewing categories and finding the numerous ebooks is challenging because there is no main or sub navigation offered.

Users can find a handful of featured ebooks in the website’s footer which ultimately take the user “off site” to a ConvertKit page:

Users can find category and tag pages via links at the end of each blog post:

While the lack of navigation is aesthetically pleasing, this is far from ideal. Users want to quickly understand what topics a blog or website covers so that they can quickly drill down to relevant topics. A lack of navigation limits the lifespan of content and doesn’t offer the ability to show off the impressive series of ebooks which have been created.

A lack of navigation negatively impacts how search engines crawl and understand the website’s structure. When pages or blog posts are multiple clicks away from the homepage, they are interpreted as less relevant or less important than other content. Navigation allows for structure and lowers the number of clicks needed to find important pages/content.
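To make “clicks away from the homepage” concrete, click depth can be computed with a breadth-first search over the site’s internal links. The sketch below assumes you already have a map of which pages link to which (for example, exported from a crawler); the page paths are hypothetical.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Return the minimum number of clicks needed to reach each page from `home`."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link map: page -> pages it links to.
site = {
    "/": ["/blog/", "/about/"],
    "/blog/": ["/category/entrepreneur/"],
    "/category/entrepreneur/": ["/some-post/"],
}
depths = click_depths(site, "/")
print(depths)
```

Pages that never appear in the result are not reachable by following links from the homepage at all, which is worth checking separately.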

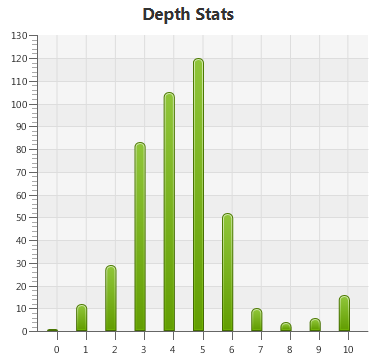

Ideally, we’d like to see pages approximately 3-5 clicks from the homepage. Currently, this is how click depth is mapped out:

Recommendations:

- Add navigational elements to the website and if wanted, work with a graphic designer to keep to the clean appearance.

- Add pages for About, Featured Work, each EBook and the Blog. The blog should contain sub navigation to the category pages.

2. Canonicalization

A canonical tag allows you to set a preferred URL for your content. Specifically, a “link rel=canonical” element is placed in the head of a web page to identify the URL that is the original source of the content on the page. If another site copies your content, this bit of code tells search engines which page is the original. It is also valuable for addressing existing and potential duplicate content issues on the site, as it takes some guesswork away from the search engine.

- A canonical tag has been implemented throughout the website, except on five pages, all related to ebooks:
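As a rough illustration of how a page could be checked for a canonical tag, the sketch below uses Python’s standard-library HTML parser to pull out the first `rel=canonical` link. The class and function names are made up for this example, and the sample HTML is hypothetical.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Remember the href of the first <link rel="canonical"> element seen."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr = dict(attrs)
        rel_values = (attr.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attr.get("href")


def find_canonical(html: str):
    """Return the canonical URL declared in `html`, or None if there is none."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


page = '<html><head><link rel="canonical" href="https://example.com/post/" /></head></html>'
print(find_canonical(page))
```

A page where this returns `None` is a candidate for the “missing canonical tag” list.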

- These pages are not on WordPress but are instead found on ConvertKit.

As categories and tags get populated with new content, content management systems will add page numbers to the URL string. This is known as pagination, and it can happen in other instances as well. Search engines can interpret paginated pages as duplicated content. We currently see this in your tag and category structure:

- https://mfishbein.com/tag/venture-capital/page/3/

- https://mfishbein.com/category/entrepreneur/page/3/

To give clear signals to the search engine that the resource or page is part of a series of pages, rel=prev and rel=next should be used. The tag pages contain the correct rel=prev, rel=next tag as seen below but the category pages are missing this tag.

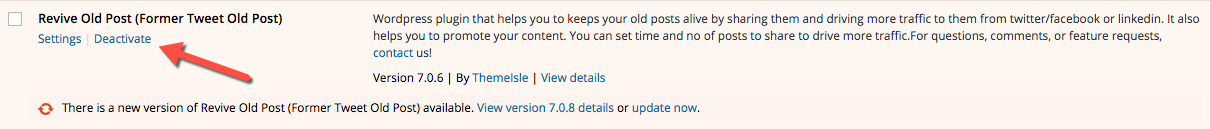

I was not able to find any examples of duplicate content which was indexed but I did find duplicate content via a plugin called Revive Old Post which is a tool used to syndicate older content on social media. The issue is that it is promoting content to a subdomain which is splitting equity away from the main website while creating a duplicate content risk.

- http://new.mfishbein.com/amazon-policy-selfpublished-authors/ is a duplicate of: https://mfishbein.com/amazon-policy-selfpublished-authors/

- http://new.mfishbein.com/ is a duplicate of: mfishbein.com/

Further, these subdomains have canonical tags pointing back to themselves which could create further confusion for search engines should one of these pages get indexed.

Recommendations:

- A deeper strategy needs to be determined to understand why eBook pages live on another web property. Ultimately, these are money pages and need to be protected and built up.

- I recommend building these as WordPress pages and not hosting them on ConvertKit as it will provide you with enhanced tracking, greater flexibility and a better user experience.

- Using a different service, such as MailChimp, will give you greater control over your brand messaging, tracking capabilities and user experience.

- Add a canonical tag to the pages that are missing the tag.

- Stop using Revive Old Post and/or fix the duplicate content issue it’s creating.

- Add rel=prev/next tags to category pages of the blog. This can be done via Yoast.

3. Accessibility and Spiderability

It is important that website content is accessible and visible to the search engines. When a search engine spider crawls a website, it is not able to see it the way humans do. If these spiders are repeatedly finding missing pages, redirects or other bad HTML site code, it’s likely you’ll be viewed less favorably by search engines.

Indexed Pages

- Google is showing the following approximate number of pages indexed:

- ~323 indexed pages found in the Search Engine Results Pages

- ~265 indexed pages found in Google Webmaster Tools

- This ratio is acceptable but could be improved.

Crawl Errors

- https://mfishbein.com/ currently has:

- 5 “404 Page Not Found” errors

Recommendations

- Review the website routinely and remove and/or repair any broken links that come up site-wide.

- Any time a page is removed from the website, a 301 redirect should be set up to improve usability and limit the errors search engine spiders receive when trying to crawl the website. A 301 redirect signals that the page has moved to a new page permanently and helps transfer any value the old page may have accrued over time.
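The 301 behavior described above boils down to a lookup: if a removed URL has an entry in a redirect map, answer with a 301 and the new location instead of a 404. The sketch below is not how any particular plugin works; the function name and paths are hypothetical.

```python
def respond(path: str, redirects: dict[str, str], live_pages: set[str]):
    """Return (status_code, location) for a requested path.

    301 means the page has moved permanently to `location`.
    """
    if path in redirects:
        return 301, redirects[path]
    if path in live_pages:
        return 200, path
    return 404, None


# Hypothetical data: one removed page mapped to its replacement.
redirects = {"/old-ebook/": "/ebooks/new-ebook/"}
live_pages = {"/", "/ebooks/new-ebook/"}

print(respond("/old-ebook/", redirects, live_pages))
print(respond("/missing/", redirects, live_pages))
```

Every path that still falls through to the 404 branch is a candidate for a new entry in the redirect map.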

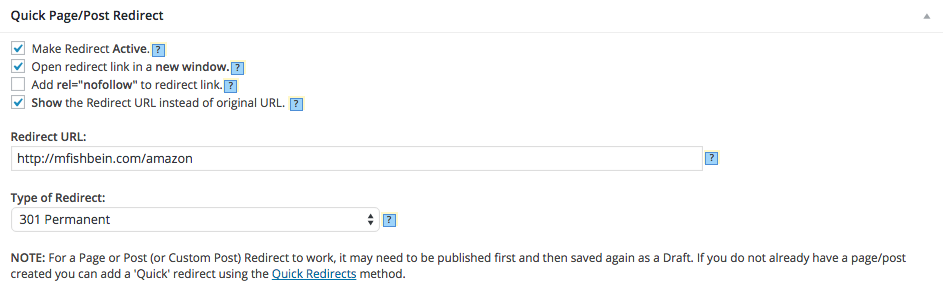

Mike: The Quick Page/Post Redirect Plugin along with the Pretty Link Plugin can be used to implement this.

XML Sitemap & HTML Site Map

XML Sitemaps are feeds designed for search engines; they’re not for people. They are lists of URLs, with some optional metadata about them, that are meant to be spidered by a search engine. XML Sitemaps are especially useful for very large sites, but every website can benefit from a clean, accurate sitemap. They help improve spiderability and ensure that all the important pages on the site are crawled and indexed. Sitemaps give the search engines a complete list of the pages you want indexed, along with supplemental information about those pages, including how frequently the page is updated. This does not guarantee that all pages will be crawled or indexed, but it helps feed the search engine and takes away guesswork.
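For a sense of what such a feed looks like and how its URL list can be read, here is a minimal sketch using Python’s standard library. The sample sitemap and its example.com URLs are hypothetical; the namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace used by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> list[str]:
    """Return every <loc> URL listed in a sitemap (or sitemap index) document."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall(".//sm:loc", SITEMAP_NS) if loc.text]


# Hypothetical sitemap with two URLs and one optional <lastmod> value.
sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc><lastmod>2015-06-01</lastmod></url>
  <url><loc>https://example.com/blog/</loc></url>
</urlset>"""

print(sitemap_urls(sample))
```

Comparing this list against crawl results is one way to spot sitemap entries that point at 404 pages.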

- The XML sitemap is found here: https://mfishbein.com/sitemap_index.xml

- The sitemap is created dynamically via the Yoast plugin and offers structure because posts, pages, categories and tags each have a unique sitemap.

- Your XML sitemap is up to date but contains the same 404 errors previously reported.

- Your XML sitemap has not been reported to the search engines.

An HTML site map is a simple HTML page that very clearly outlines the site’s hierarchy and how content is organized within it. It is a list of text links to each page. The HTML sitemap is useful for human visitors and can also help search engine bots verify the validity of your XML sitemap.

- An HTML sitemap could not be found.

Recommendations

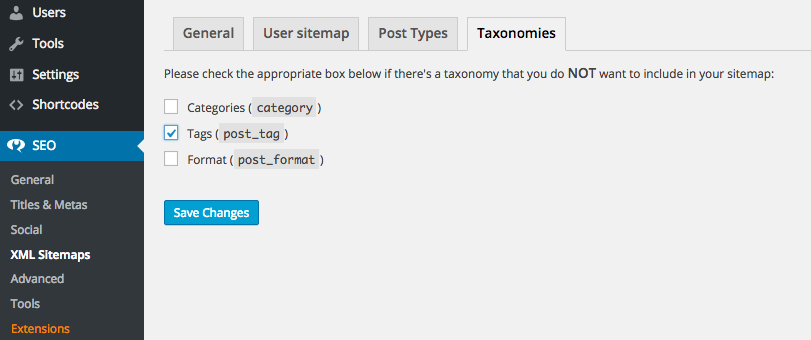

- Remove the XML sitemap for Tags. Currently, your tags are correctly ‘no indexed’ which indicates to search engines that we’d prefer to not have this content indexed. Having a sitemap for pages which are ‘no indexed’ can create confusion for the search engine. You can do this via Yoast; SEO > XML Sitemaps > Taxonomies > select Tags

- Create an HTML site map that is organized based on the website’s navigation structure and is clearly accessible, linked to from the site’s footer.

- Submit each sitemap to Google Webmaster Tools as well as Bing.

Site Coding

The HTML Code is the building block of a website. The search engines ‘crawl’ through this code to determine what the site is about, and to evaluate the relevance and value of the site in relation to others on the internet.

- Your website’s code overall is clean. However, there are opportunities to improve it even further.

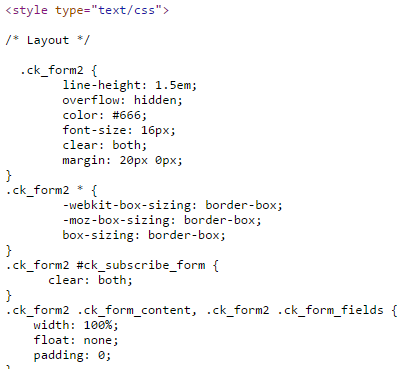

- Throughout the code there are a number of instances of inline CSS and JavaScript. Instead all CSS and JavaScript should be combined into respective external files.

- Here are a few examples of inline CSS and JavaScript:

- It is important to note that CSS should be referenced before an external JavaScript file in the document head.
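One rough way to find inline CSS and JavaScript like the instances mentioned above is to count `style` attributes, `<style>` blocks, and `<script>` tags without a `src`. The sketch below uses the standard-library HTML parser; the class name and sample markup are made up. Note that it would also count `<script>` blocks holding structured data, so results need a manual look.

```python
from html.parser import HTMLParser


class InlineCodeCounter(HTMLParser):
    """Count inline style attributes, <style> blocks and <script> tags without src."""

    def __init__(self):
        super().__init__()
        self.style_attributes = 0
        self.style_blocks = 0
        self.inline_scripts = 0

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if "style" in attr:
            self.style_attributes += 1
        if tag == "style":
            self.style_blocks += 1
        if tag == "script" and "src" not in attr:
            self.inline_scripts += 1


# Hypothetical page with one of each kind of inline code plus one external script.
html = (
    "<head><style>p{color:red}</style>"
    '<script src="/app.js"></script><script>var a = 1;</script></head>'
    '<body><p style="margin:0">Hi</p></body>'
)
counter = InlineCodeCounter()
counter.feed(html)
print(counter.style_attributes, counter.style_blocks, counter.inline_scripts)
```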

Building a site with well-developed information architecture and clean “spider-friendly” code will ensure proper crawling and indexation of the entire site. Search engine crawlers have a character or time limit they can spend on the site and, at the same time, the spiders are getting smarter every day and can understand complex experiences and actions. Keeping clean code and allowing the spiders to crawl the code will limit issues with search engines while making good use of the crawl budget.

Recommendations

- All JavaScript should be kept in external files to keep the code clean and obstacle free for the search engine crawlers. Furthermore, remove all unnecessary JavaScript from the code.

- Overall your website’s code is very clean. The benefit of any recommendations would likely be outweighed by the development costs associated.

4. Site Speed

In addition to crawlability, having clean and proper coding is very important so that the site loads quickly.

Site speed has been an increasingly important factor for successful websites, not just for visitors who lose their trust when sites are slow or don’t load, but also for search engines that have incorporated it as a ranking factor.

As an example of the importance of site speed, Amazon’s case study showed that an extra 1 second in load time cost them a 10% loss in conversions.

Site speed is cumulative, so it’s important that all the factors that affect load time are looked at, tested and improved on. It’s also important to weigh the perceived benefits of improving website speed against the associated costs. Often, a cost-benefit analysis will show that the costs might outweigh the benefit.

- Your site’s average load time:

- First view: 18.8 seconds

- Repeat view: 18.7 seconds

- Google typically recommends a load time of 1.5 to 2 seconds.

- Google’s page load time score:

- Desktop: 82 out of 100

- Mobile: 65 out of 100

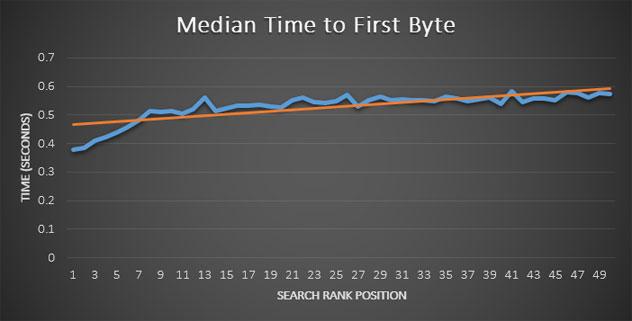

When considering speed factors that directly affect the ranking algorithm, the time it takes to load the first byte of data is key. A study published on Moz.com reports that “a clear correlation was identified between decreasing search rank and increased time to first byte”. The study shows that a jump from 0.38 seconds to 0.49 seconds represents an average drop from position 1 to position 7. Overall, less than 0.4 seconds for the first byte of data is the recommended benchmark.

A speed check on WebPageTest.org of www.mfishbein.com showed a 0.474 second delay before the first byte was loaded.

Recommendations:

- Increasing website speed is another recommendation where the cost can outweigh the benefit. It’s noted in this audit as something to keep in mind for future website changes.

- Eliminate render-blocking JavaScript and CSS in above-the-fold content

- Reduce server response time

- Leverage browser caching (this plugin can help)

- Prioritize visible content

- Minify CSS

- Optimize images

- Minify HTML

- Ongoing monitoring of these and other factors that can help with your site speed – it is not a ‘set and forget’ type of activity.

5. Mobile

Due to the increased use of mobile devices for internet browsing, mobile compatibility must be considered. Any website that you view on your desktop should also be easily accessible on a mobile device.

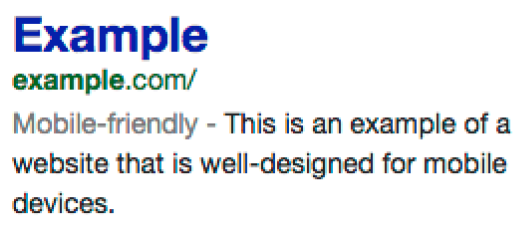

In November 2014, Google announced the introduction of Mobile Friendly Labels in Mobile Search Results. The update labels mobile-friendly websites in the search results.

Expanding upon this emphasis on mobile results, Google updated their algorithm on April 21st, 2015, expanding mobile-friendliness as a ranking signal. The aim of this update is to make it easier for users to find relevant, high-quality search results that are optimized for their device. Since the rollout, it appears that this update was largely hype. This isn’t to say that the update wasn’t important.

- The https://mfishbein.com/ website is not responsive or mobile friendly. On the left is how GoogleBot sees your website and on the right is the user’s view:

Over the last 30 days, 31% of your website’s traffic came from a mobile device or tablet. This is a significant percentage that is likely to increase with time.

- Mobile users, on average, spend the least amount of time on site and view fewer pages while having the highest bounce rate, which signals a lost opportunity to offer a solid user experience.

Recommendations:

- There are numerous plugins available which will make your website responsive. Responsive websites automatically resize based on device type offering users the best experience while pleasing search engines.

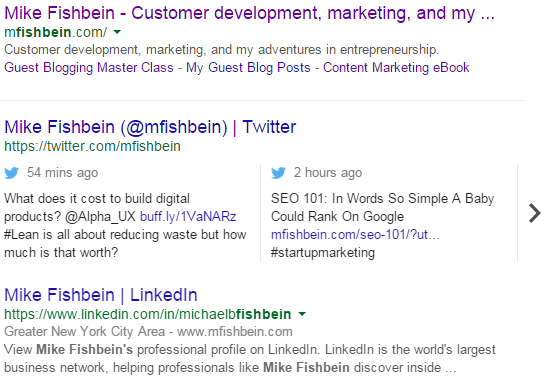

6. Schema Markup

Schema markup is a collaboration between the major search engines that, through a specific markup, helps them to better understand the information on a webpage. This makes it possible to provide richer results on the search engine results pages often seen in the form of additional information, links to social accounts or a star review appearing under the title tag. Schema markup enables webmasters to embed structured data on their web pages for use by search engines and other applications.

The Schema vocabulary describes a variety of item types so that when a user searches for a phrase that relates to one of these item types (e.g., product, review, person, event) additional information can be displayed in the organic results on the search engine results page.

There has been a large debate regarding the usefulness of Schema markup and its user-facing results, known as Rich Snippets. Regardless of how Google visually interprets schema markup, it remains a vital way to ensure that search engines can successfully interpret and understand the information and relationships found on your website.

- Google Webmaster Tools reports 413 marked-up items found on 100 pages. There are no errors reported.

- A manual review shows that there are individual pages missing schema for:

- datePublished

- headline

- image

- Social Schema – markups that allow content to be displayed in the correct format on social media channels such as Twitter, Facebook and LinkedIn are being used and are correct.
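To show what markup for the missing `datePublished`, `headline` and `image` properties could look like, here is a sketch that builds a schema.org `BlogPosting` snippet. It uses the JSON-LD format as an assumption (the site’s existing markup may use a different syntax), and the function name and every value passed in are placeholders.

```python
import json


def blog_posting_jsonld(headline: str, date_published: str, image_url: str, author_name: str) -> str:
    """Build a JSON-LD <script> tag describing a blog post with schema.org vocabulary."""
    data = {
        "@context": "https://schema.org",
        "@type": "BlogPosting",
        "headline": headline,
        "datePublished": date_published,  # ISO 8601 date
        "image": image_url,
        "author": {"@type": "Person", "name": author_name},
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values for illustration only.
snippet = blog_posting_jsonld(
    headline="Example Post Title",
    date_published="2015-06-01",
    image_url="https://example.com/images/example-post.png",
    author_name="Example Author",
)
print(snippet)
```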

Recommendations

- There are numerous markups that could be applied to your pages. Raven Tools offers a Schema Creator which will give readers a fantastic head start on what to mark up and how to do so.

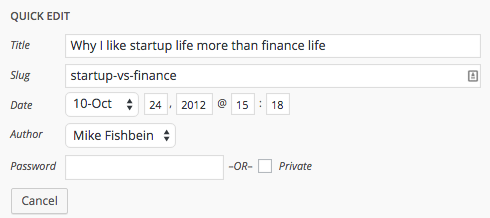

7. URL Structure

Having descriptive URLs with keywords can help with building relevancy for the pages and help with user click-through rates, as the keywords will suggest the content of the page and thus its relevancy.

Parameters, delimiters and special characters can make URLs difficult for the search engines to understand, which may cause fewer pages to be indexed. In addition, they are very user unfriendly.

The real secret with URLs is consistency in the structure across all URLs. Whether a URL includes hyphens, or ends in a trailing slash or .html, the same convention should be used across the site.

URLs with and without a trailing slash or mixed capitalization can be seen by the search engines as two different URLs pointing to the same content, thus causing duplicate content concerns.
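A consistency check along these lines is easy to automate. The sketch below flags the problems discussed above for a single URL; it assumes the convention this site already follows (lowercase, hyphens, trailing slash), so a site that ends its URLs in .html would need a different rule. The function name and issue labels are made up.

```python
from urllib.parse import urlsplit


def url_issues(url: str) -> list[str]:
    """List URL-structure problems, assuming a lowercase/hyphen/trailing-slash convention."""
    parts = urlsplit(url)
    path = parts.path
    issues = []
    if path != path.lower():
        issues.append("uppercase characters")
    if "_" in path or "%20" in path or " " in path:
        issues.append("words not separated by hyphens")
    if not path.endswith("/"):
        issues.append("missing trailing slash")
    if parts.query:
        issues.append("query parameters")
    return issues


print(url_issues("https://mfishbein.com/tag/venture-capital/page/3/"))
print(url_issues("https://example.com/My_Post?id=7"))
```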

- The current URL structure is strong. The URLs are all lowercase, include hyphens, and end in a trailing slash.

- There are a few examples where hyphens are not used:

- And a few examples where the URLs could be more descriptive:

Recommendations

- URLs should continue to be consistent, use hyphens to separate words, and avoid capitalization.

- Before crafting URLs, consider how they could be written so that they better describe the page’s content.

8. Content

Content is the lifeblood of search engine optimization, requiring not only a keyword focus but also relevancy to the topics a website covers.

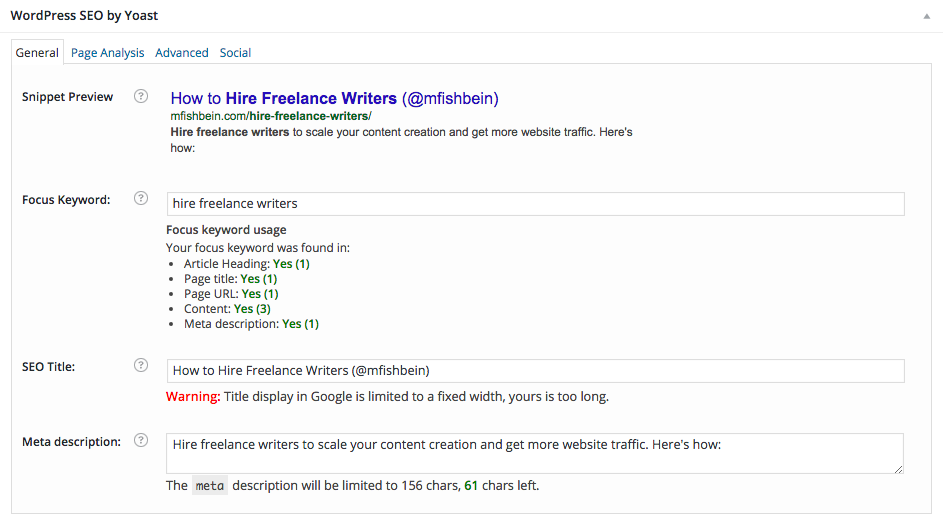

Below is a screenshot of how your website is displayed in search results. It is important for the titles and descriptions of your pages to accurately reflect the content of each page.

Title Tags

Title tags are your front line of offense, as they will slow the user’s scroll through the results and help convince them your answer is the best match for their query.

Titles should be keyword-focused, unique for each page of the site, include branding, and be descriptive about the content of the page to entice searchers to click through.

Aim to use between 55 and 64 characters as this gives you the opportunity to stand out and encourage searchers to visit your site over the other results in the SERP.

- Out of 438 pages reviewed, all have title tags.

- 37 are duplicated, but these are all for paginated pages and can be ignored.

- 65 are over 65 characters

- 83 are below 30 characters

- 151 are the same as the H1 tag
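The checks behind those counts can be sketched as a small function. The 30 and 65 character thresholds are taken from the counts above; the function name and issue labels are made up for illustration.

```python
def title_issues(title: str, h1: str = "", min_len: int = 30, max_len: int = 65) -> list[str]:
    """Flag a title tag that is missing, too short, too long, or identical to the H1."""
    text = title.strip()
    if not text:
        return ["missing"]
    issues = []
    if len(text) < min_len:
        issues.append("too short")
    if len(text) > max_len:
        issues.append("too long")
    if h1 and text.lower() == h1.strip().lower():
        issues.append("same as H1")
    return issues


# The audit notes that the homepage H1 is the branded phrase "Mike Fishbein".
print(title_issues("Mike Fishbein", h1="Mike Fishbein"))
```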

Recommendations

- Title tags should be manually created and reviewed for the important pages/posts of the website; improvements should include a keyword focus and ensure the titles are enticing and relevant to that page’s content.

- While there is no need for counting exact number of characters, the length of the titles should be around the available ~55 characters, or a width of 482 pixels if you really want to nerd out on your SEO, to ensure they don’t get cut off when displayed in the search engine results.

Mike: The keyword to use can be determined via Google Keyword Planner and implemented via the Yoast Plugin.

Meta Tags

Meta descriptions do not increase search result ranking, but they are a main factor in attracting users to click through to your website from the search results. They appear under the title in the search engine results pages (SERPs), so they are your second most important marketing tool after the title tag.

A well written and inviting meta description will entice users to visit your website. Meta descriptions should be around 155 characters long and crafted using keyword research but, at the same time, appealing to the searchers so they want to click through.

- Meta descriptions are missing on 372 pages.

- 1 page has a meta description which is over 156 characters

- 70 pages have descriptions that are below 70 characters.

Recommendations

- Create unique, enticing meta descriptions for all pages of the website with a strong call to action in a tone that speaks to your readers.

- As with many other elements on a website, you may want to do some testing with different titles and descriptions to see how they affect your visibility and click-through rates.

Copy

Body copy helps search engines determine the page’s relevance so it is important to have copy about the target topic on the page. While it is important to use keywords strategically throughout the copy, it’s more important to write good quality content that speaks to your target audience by using rich natural language with synonyms and related phrases. Stuffing your website with keywords, however, can be perceived as spam by the search engines which will ultimately affect your rankings while resulting in a bad user experience.

- Your content is excellent and on point.

- There were 32 images found on the site and no videos. Creating content beyond the written word should be explored as it will add to the depth of the website while providing other opportunities.

Headings

Header tags help structure content and lay out the hierarchy as well as break up content into more digestible blocks for users while providing search spiders hints as to what the page is about. Using Heading tags on the Home Page for your main content is a best practice not only for SEO reasons but also editorially to clearly communicate and structure the content hierarchically. In addition, as the Heading element’s inherent meaning is to define what the topic and sub topics of the content are, it is an important element for ‘semantic closeness’ to the rest of the copy (how semantically relevant your content is to the heading), which search engines use to analyze and understand content.

- The use of Header tags is overall acceptable.

- I recommend updating the H1 tag found on the homepage from its current branded phrase of ‘Mike Fishbein’ to something more descriptive.

- H2 tags are found but other supporting headers such as H3 and H4s are not found.

Recommendations

- Differentiate your header tags; a common rule for longer posts is as follows:

- 1 H1 tag on each page

- 3-4 H2 tags on a page depending on content length

- 3-5 H3 and H4 tags as needed to support the content
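A quick way to see how a page measures up against this rule is to pull the heading levels out of its HTML. The sketch below uses a simple regular expression rather than a full HTML parser, which is an assumption that the markup is reasonably well formed; the function names and sample markup are made up.

```python
import re


def heading_levels(html: str) -> list[int]:
    """Return the heading levels (1-6) in the order they appear in the HTML."""
    return [int(level) for level in re.findall(r"<h([1-6])[\s>]", html, flags=re.IGNORECASE)]


def heading_report(html: str) -> dict:
    """Summarize H1 usage and which supporting levels (H2-H4) are absent."""
    levels = heading_levels(html)
    return {
        "h1_count": levels.count(1),
        "has_single_h1": levels.count(1) == 1,
        "missing_levels": [f"H{n}" for n in (2, 3, 4) if n not in levels],
    }


# Hypothetical post with one H1 and two H2s but no H3 or H4.
post = "<h1>Title</h1><h2 class='x'>First</h2><p>text</p><h2>Second</h2>"
print(heading_report(post))
```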

Images

Optimizing your file names, including images, is an important part of on-site optimization. Well-optimized images not only support the optimization efforts on the pages but they can also rank on their own in image search and drive traffic to the site.

The “alt” attribute specifies an alternate text for user agents and should be used to comply with Section 508 of the Rehabilitation Act. Search engines are the ‘most disabled’ users who will ever come to your website and cannot see images, so using keywords in the file names, and especially in the “alt”, will help them know how to index the image.

The “title” attribute offers advisory information about the element for which it is set. It is not indexed by the search engines; however, the “alt” attribute can be supplemented with a “title” if it provides value to your users.

- Of the 32 images found:

- 3 are over 100 KB

- 9 are missing alt text

- Your images could be improved by using descriptive file names. Using lower case letters and dividing words with dashes is a best practice.

- Images are indexed and showing up in Google Image search.

- While most of the images contain alt text, they could all be improved with more descriptive text. The ‘jail bars’ image found on the Jail blog post contains this alt text:

- Logos found on the homepage contain this alt text:

Recommendations

- Use more meaningful, descriptive keywords in the image file names, captions and alt attribute.

- Use meaningful, descriptive keywords in the Alt attribute of the images and make sure all product images have one.
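The file-name convention mentioned earlier (lowercase letters, words divided by dashes) can be applied automatically before upload. This is a minimal sketch with a made-up function name; it only normalizes the name, so choosing descriptive words is still up to you.

```python
import re
from pathlib import PurePosixPath


def seo_file_name(file_name: str) -> str:
    """Lowercase a file name and replace runs of non-alphanumeric characters with dashes."""
    path = PurePosixPath(file_name)
    stem = re.sub(r"[^a-z0-9]+", "-", path.stem.lower()).strip("-")
    return f"{stem}{path.suffix.lower()}"


# Hypothetical upload name.
print(seo_file_name("Jail Bars_Photo 01.JPG"))
```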

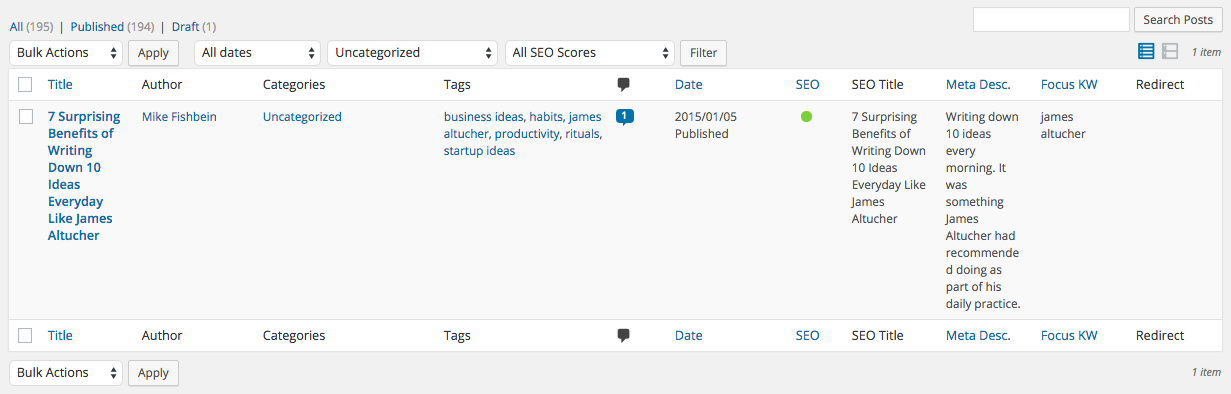

Blog Categories

Recommendations

- Consider removing “Uncategorized” and moving the one post that is found to another category.

- Consider updating the current categories so that they have more descriptive names and aren’t abbreviated.

- Keyword research should be utilized to maximize the impact of the blog.

- Focus your blog content on connecting with your audience by writing about topics they are passionate about and that solve their problems.

- Author pages should be added for each blog writer. The author pages should include an image, a brief bio that includes links to the author’s contact and social networks, as well as provide a list of posts by the author.

- Once author pages are created, link the author’s Google+ profile to the website to implement Rel=Author for specific pages on the site. Until recently, authorship markup, a type of schema markup, made your search results stand out via a profile image, which led to increases in click-through rates when displayed on a SERP. Google has stopped offering this as a benefit. Nevertheless, authorship markup remains a vital way to ensure that search engines can successfully interpret and understand the information found on a website. More information can be found in the Schema Markup section.

9. Google Analytics

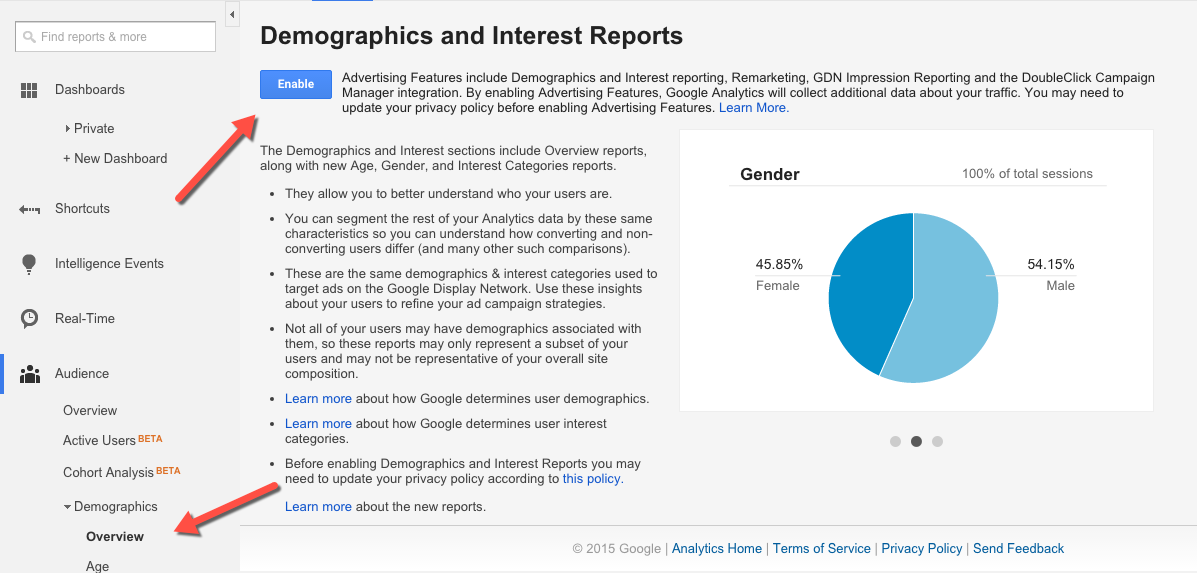

Demographics & Interest Reports

Demographics and Interest reports allow you to better understand who your readers are by collecting information such as age, gender, and interests.

- Demographics & Interest reports are not enabled.

Google Search Console (formerly Webmaster Tools)

Google Search Console allows Google to send troubleshooting data, site metrics and communications to webmasters who install it while providing other useful data and site statistics. When connected to Google Analytics, the Search Console provides richer data.

- Search Console is not currently connected to Google Analytics. This can be done via the Admin panel.

- Bing has Webmaster Tools and you should consider establishing an account as it gives additional data that Google doesn’t offer.

Site Search

Site Search is currently found on your 404 page. As a best practice, it should be enabled site-wide to allow searchers to easily find specific topics or posts. When enabled, the queries that users search for can be tracked. This can help identify gaps in content or navigational issues.

- Site Search tracking is not currently enabled.

Mike again: In conclusion, there are clearly many ways I can improve on my on-page SEO (and you could improve yours). But, as noted, some of these small changes won’t make that much of a difference in the long run and will take a lot of time to implement.

Even if I make all of these changes, my ranking may improve, but without amazing content that provides value to people, no one will want to come to my site or stick around. So, I will definitely be implementing many of these recommendations, but remember there’s no such thing as a free lunch, and creating valuable content must be a part of any content marketing or SEO strategy.